If you've been following along, the last couple of months have brought impressive growth to Macgasm, and with it, impressive server load. If you follow us on Twitter, you'll remember the times when, right after we published a post, the site would slow to a crawl or flat-out die under all the traffic.

We just got through WWDC 2011 without so much as a hiccup in the site's availability (as far as I can tell; if you had a problem, PLEASE let us know). So, how'd we pull off such awesomeness? I'd love to tell you all about it, so sysadmins and musicians alike, read on for a little look behind the scenes at Macgasm.

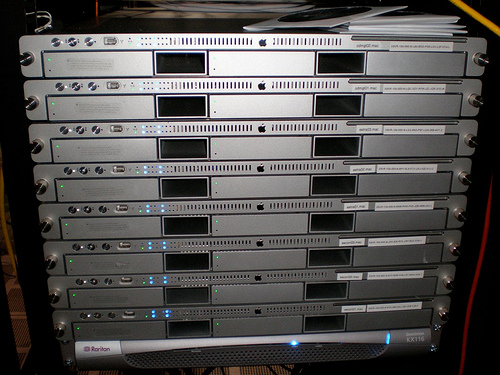

Web Server

It all starts with the web server. As for the physical hardware, I don't really know what we're running on; we're on the Rackspace Cloud, see? We have two boxes: one with 1GB of RAM running the database, and another with 4GB of RAM running the web server and the PHP processes. Both run Ubuntu Server 10.10.

We were running Apache 2.2 at our previous web host, so that was the initial setup on the new boxes, too: originally three boxes with 512MB of RAM each, behind a load balancer. BUT, when we made the switchover in the middle of the night, the traffic proceeded to crash all three servers. Yeah, don't know how that happened. We tried boxes with 1GB of RAM, then 2GB, and still we'd run out of memory, start swapping to disk like mad, and soon the box would freeze completely.

Enter nginx, the Russian wonder. The event-driven beast. The scary black hole web developers tiptoe into when they get curious. Rumor has it that once you go in, you hear voices in your head every time you use Apache from that day forth.

ANYWAY, after briefly reading up on nginx and how to configure it as a reverse proxy to PHP-FastCGI, I gave it a go on a 1GB box, with double the weight of the other nodes behind the load balancer, since it had double their RAM. It took the load with RAM to spare. So I tried 4x the weight. Didn't even break a sweat. Then I removed the Apache nodes entirely, and it took all the load by itself! Daytime load? Nothing a little more RAM and a second node can't handle.
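To make the "weight" idea a bit more concrete, here's a minimal Python sketch of smooth weighted round-robin, the algorithm nginx itself uses for weighted upstream servers. Treat it as an illustration only: Rackspace's load balancer may distribute requests differently, and the node names (`apache-1`, `nginx-1`, and so on) are made up. The gist is that a node with weight 2 receives two requests for every one sent to a weight-1 node.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    weight: int       # relative share of traffic this node should receive
    current: int = 0  # running score used by the smooth algorithm


def pick(nodes: list[Node]) -> Node:
    """Return the next node using smooth weighted round-robin."""
    total = sum(n.weight for n in nodes)
    for n in nodes:
        n.current += n.weight                   # every node earns its weight
    best = max(nodes, key=lambda n: n.current)  # highest score wins this request
    best.current -= total                       # the winner pays back the total
    return best


def simulate(weights: dict[str, int], requests: int) -> Counter:
    """Count how many of `requests` each (hypothetical) node would receive."""
    nodes = [Node(name, w) for name, w in weights.items()]
    return Counter(pick(nodes).name for _ in range(requests))


# Three 512MB Apache nodes plus one 1GB nginx node at double weight.
print(simulate({"apache-1": 1, "apache-2": 1, "apache-3": 1, "nginx-1": 2}, 5))
```

Bump `nginx-1` to a weight of 4 and it takes four of every seven requests, which is roughly the 4x experiment described above. The "smooth" part means the heavy node's turns are interleaved with the others rather than sent in one burst.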

Database and Caching

The database server is a simple 1GB instance with MySQL 5 installed and running as normal. The magic lies in tying the two boxes together with some Memcached action. With 256MB of Memcached space, W3 Total Cache really shines. See, the default disk cache setting works well enough, but reading from RAM is faster than reading from even the fastest disk, and when you have spare RAM sitting around, Memcached is the perfect way to put it to work. Once that's set up, a single nginx-powered box with 4GB of RAM is more than enough to handle our biggest traffic spikes.
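Here's a rough idea of what a page cache does on every request, sketched in Python. This is the generic cache-aside pattern, not W3 Total Cache's actual code (which is PHP and far more involved). A plain dictionary with expiry times stands in for Memcached so the example runs on its own, and `render_page` is a hypothetical placeholder for the expensive WordPress-plus-MySQL work.

```python
import time
from typing import Callable, Dict, Optional, Tuple


class TTLCache:
    """In-memory stand-in for Memcached: stores values with an expiry time."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._store: Dict[str, Tuple[float, str]] = {}
        self._clock = clock

    def get(self, key: str) -> Optional[str]:
        entry = self._store.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self._clock() >= expires_at:  # stale entries count as a miss
            del self._store[key]
            return None
        return value

    def set(self, key: str, value: str, ttl: float) -> None:
        self._store[key] = (self._clock() + ttl, value)


def get_page(cache: TTLCache, path: str,
             render_page: Callable[[str], str], ttl: float = 300.0) -> str:
    """Cache-aside: serve from RAM if possible, otherwise render and store."""
    key = f"page:{path}"
    html = cache.get(key)
    if html is None:  # miss: do the expensive PHP/MySQL work once
        html = render_page(path)
        cache.set(key, html, ttl)
    return html


renders = []


def render_page(path: str) -> str:  # hypothetical expensive renderer
    renders.append(path)
    return f"<html>{path}</html>"


cache = TTLCache()
for _ in range(1000):  # a burst of readers hitting the same (made-up) post
    get_page(cache, "/wwdc-2011-keynote", render_page)
print(len(renders))  # rendered once; the other 999 requests came from the cache
```

That ratio is the whole trick: during a traffic spike almost every request is a cache hit, so PHP and MySQL barely notice.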

CDN

The final ingredient in our not-so-secret sauce is a CDN. We've been leveraging two tools for this: Rackspace Cloud Files and Cloudflare. Cloud Files is GREAT for us because it lets us keep everything local. Since we can send files to it without leaving the datacenter, and Rackspace handles distribution at no charge, we only end up paying for outbound bandwidth (which can cost a pretty penny, let me tell you).

Next comes Cloudflare, a unique, free, DNS-based CDN and security service. Basically, you give them control of your DNS by pointing your domain at their nameservers. They host your DNS for free and let you manage it from their AWESOME control panel. Plus, they check all your visitors against Project Honey Pot's HTTP Blacklist to keep comment spammers and other risky visitors away from your site before they can bother your servers and waste your time and bandwidth. Pretty dang cool, if you ask me. Josh has had some issues with it, and it may be partially to blame for some of the ad-serving problems we've been seeing, but tests are inconclusive. :)
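For the curious, here's roughly how an HTTP Blacklist lookup works, based on Project Honey Pot's published http:BL format: you reverse the visitor's IPv4 octets, prefix your access key, and do an ordinary DNS query under `dnsbl.httpbl.org`. No answer means the IP isn't listed; an answer of the form `127.days.threat.type` describes it. This is a Python sketch of doing the check yourself, not a look at how Cloudflare does it internally. The access key is a placeholder, and the blocking threshold in `should_block` is an arbitrary assumption.

```python
import ipaddress
import socket
from typing import Optional

# Bit flags in the last octet of an http:BL answer (0 means search engine).
VISITOR_TYPES = {1: "suspicious", 2: "harvester", 4: "comment spammer"}


def httpbl_query(access_key: str, ip: str) -> str:
    """Build the DNS name to query: key + reversed IPv4 octets + zone."""
    octets = str(ipaddress.IPv4Address(ip)).split(".")  # also validates the IP
    return f"{access_key}.{'.'.join(reversed(octets))}.dnsbl.httpbl.org"


def decode_answer(answer: str) -> dict:
    """Interpret a 127.days.threat.type response."""
    first, days, threat, vtype = (int(part) for part in answer.split("."))
    if first != 127:
        raise ValueError(f"unexpected http:BL answer: {answer}")
    if vtype == 0:
        # For search engines the third octet identifies the engine, not a threat score.
        return {"types": ["search engine"], "engine_id": threat}
    return {
        "types": [name for bit, name in VISITOR_TYPES.items() if vtype & bit],
        "days_since_seen": days,
        "threat_score": threat,
    }


def lookup(access_key: str, ip: str) -> Optional[dict]:
    """Live DNS check; None means the IP is not listed (or the lookup failed)."""
    try:
        answer = socket.gethostbyname(httpbl_query(access_key, ip))
    except socket.gaierror:
        return None
    return decode_answer(answer)


def should_block(info: Optional[dict], min_threat: int = 25) -> bool:
    """Hypothetical policy: block listed non-search-engine visitors at or above a threat score."""
    if info is None or "threat_score" not in info:
        return False
    return info["threat_score"] >= min_threat


# Placeholder key and a documentation-range IP; prints the name you'd query.
print(httpbl_query("abcdefghijkl", "203.0.113.7"))
```

Because the whole check is a single DNS query, it's cheap enough to run before a visitor ever reaches PHP, which is exactly why doing it at the DNS/edge layer saves server time.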

So, my friend, what have you learned? Didn't they tell you there'd be a quiz at the end? Come on now, start typing in the comments: what you learned, what you liked, what you didn't understand. We'd love to answer all your questions about our setup and how it evolved into what powers Macgasm today. It's definitely been an adventure for us, and we're counting on you to keep it going!